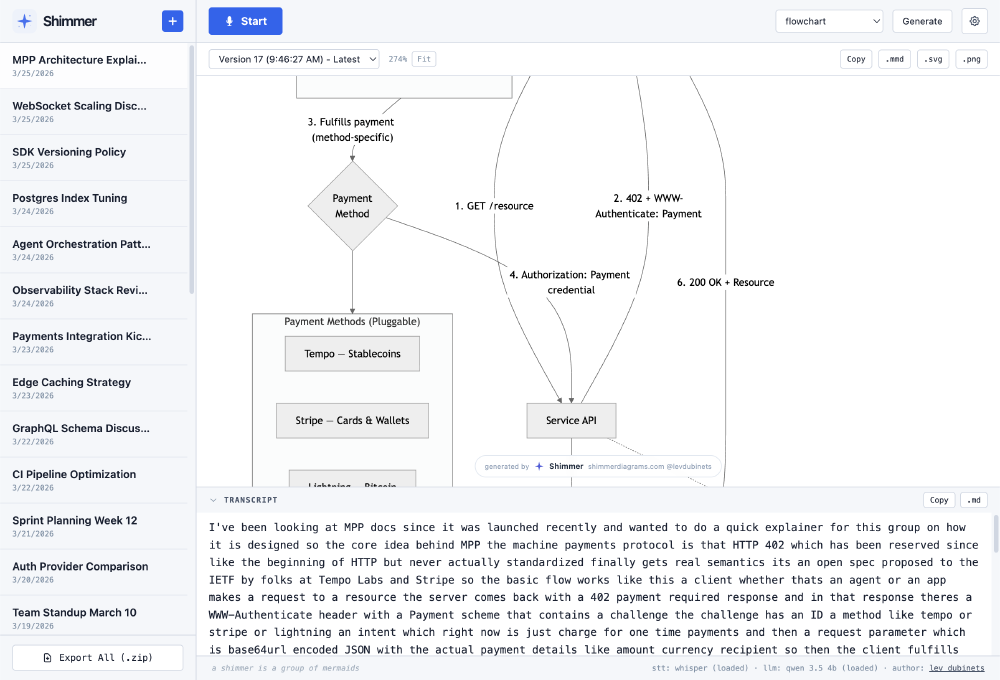

Shimmer: Live Diagrams for Your Meetings

I built Shimmer, a tool that listens to your meetings and generates diagrams in real time.

It transcribes your meeting with on-device speech recognition (Whisper or Voxtral Mini via WebGPU), then runs a three-step LLM pipeline (also in-browser, using Qwen 3.5) that continuously generates and evolves Mermaid diagrams as the conversation unfolds. By default, no audio or data leaves your browser, but you can plug in an OpenAI or Anthropic API key for higher-quality LLM output.
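To give a feel for the "continuously generate and evolve" part, here is a minimal sketch of one way transcript chunks could be batched before each diagram update. It is an illustration under assumptions, not Shimmer's actual code: the `TranscriptBuffer` class and its `minNewWords` threshold are hypothetical names made up for this example.

```typescript
// Hypothetical helper: collects transcript chunks and reports when enough new
// speech has accumulated to justify re-running diagram generation.
class TranscriptBuffer {
  private chunks: string[] = [];
  private wordsSinceLastRun = 0;
  private readonly minNewWords: number;

  // minNewWords is an assumed tuning knob, not a documented Shimmer setting.
  constructor(minNewWords: number = 25) {
    this.minNewWords = minNewWords;
  }

  // Append one transcribed chunk; returns true when a pipeline run is due.
  push(chunk: string): boolean {
    const text = chunk.trim();
    if (text.length === 0) return false; // ignore silence / empty results
    this.chunks.push(text);
    this.wordsSinceLastRun += text.split(/\s+/).length;
    return this.wordsSinceLastRun >= this.minNewWords;
  }

  // Return the full transcript so far and reset the "new words" counter.
  flush(): string {
    this.wordsSinceLastRun = 0;
    return this.chunks.join(" ");
  }
}
```

The idea is that the speech recognizer calls `push` for every chunk, and the diagram pipeline only runs on `flush()` once `push` returns `true`, which keeps a small in-browser model from being invoked on every few words.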

The pipeline has three steps: it classifies which type of diagram fits the conversation, distills the transcript into structural elements, and generates valid Mermaid syntax. The diagram evolves incrementally as new discussion points come up. Supported types are flowcharts, sequence diagrams, mindmaps, timelines, state diagrams, ER diagrams, and class diagrams.
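The classify, distill, and generate steps can be sketched roughly as follows. This is a simplified illustration rather than the real implementation: the `Llm` function type, the prompt wording, and the fallback rules are all assumptions, and the only validity check is that the reply starts with the expected Mermaid keyword.

```typescript
// Mermaid header keywords for the seven supported diagram families.
const DIAGRAM_TYPES = [
  "flowchart",
  "sequenceDiagram",
  "mindmap",
  "timeline",
  "stateDiagram-v2",
  "erDiagram",
  "classDiagram",
] as const;
type DiagramType = (typeof DIAGRAM_TYPES)[number];

// Hypothetical LLM interface: prompt in, text out. It could wrap an
// in-browser model or a hosted API.
type Llm = (prompt: string) => Promise<string>;

interface PipelineResult {
  type: DiagramType;
  elements: string[];
  mermaid: string;
}

// Step 1: pick a diagram type; fall back to "flowchart" on an unknown reply.
async function classify(llm: Llm, transcript: string): Promise<DiagramType> {
  const reply = (await llm(`Pick one of [${DIAGRAM_TYPES.join(", ")}] for:\n${transcript}`)).trim();
  return DIAGRAM_TYPES.find((t) => t === reply) ?? "flowchart";
}

// Step 2: reduce the transcript to one structural element per line.
async function distill(llm: Llm, transcript: string, type: DiagramType): Promise<string[]> {
  const reply = await llm(`List the elements of a ${type}, one per line:\n${transcript}`);
  return reply.split("\n").map((line) => line.trim()).filter((line) => line.length > 0);
}

// Step 3: produce Mermaid source, passing the previous diagram so the model
// can extend it instead of starting over.
async function generate(
  llm: Llm,
  type: DiagramType,
  elements: string[],
  previous: string | null,
): Promise<string> {
  const context = previous === null ? "" : `Current diagram:\n${previous}\n`;
  const reply = (await llm(`Write a Mermaid ${type}.\n${context}Elements:\n${elements.join("\n")}`)).trim();
  if (reply.startsWith(type)) return reply; // cheap sanity check only
  if (previous !== null) return previous;   // keep the last good diagram
  throw new Error(`LLM reply is not a ${type} diagram`);
}

async function runPipeline(
  llm: Llm,
  transcript: string,
  previous: string | null = null,
): Promise<PipelineResult> {
  const type = await classify(llm, transcript);
  const elements = await distill(llm, transcript, type);
  const mermaid = await generate(llm, type, elements, previous);
  return { type, elements, mermaid };
}
```

Feeding the previous diagram back into the last step is one plausible way to get incremental evolution. Keeping the last good diagram when a reply fails the check means a bad generation never blanks the screen.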

You can run it fully locally, with both STT and LLM on-device via WebGPU and no data sent anywhere. Or plug in OpenAI, Anthropic, or Ollama for diagram generation using your own key. Sessions persist in IndexedDB with full transcript and diagram version history.
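For the version history, here is a rough sketch of what a session record with append-only diagram versions might look like. The record shapes and field names are hypothetical, not Shimmer's actual schema. The records are plain objects, so something like them could be written to an IndexedDB object store with `put`, but the storage call itself is left out to keep the example self-contained.

```typescript
// Hypothetical record shapes; field names are illustrative only.
interface DiagramVersion {
  version: number;   // 1-based, increases by one per stored change
  mermaid: string;   // full Mermaid source at this version
  createdAt: number; // epoch milliseconds
}

interface SessionRecord {
  id: string;
  transcript: string[];
  versions: DiagramVersion[];
}

function createSession(id: string): SessionRecord {
  return { id, transcript: [], versions: [] };
}

// Returns a new record with the diagram appended as the next version.
// An unchanged diagram is skipped so the history only holds real changes.
function appendVersion(
  session: SessionRecord,
  mermaid: string,
  now: number = Date.now(),
): SessionRecord {
  const last = session.versions[session.versions.length - 1];
  if (last !== undefined && last.mermaid === mermaid) return session;
  const next: DiagramVersion = {
    version: session.versions.length + 1,
    mermaid,
    createdAt: now,
  };
  return { ...session, versions: [...session.versions, next] };
}

// Look up the Mermaid source for a given version, if it exists.
function diagramAt(session: SessionRecord, version: number): string | undefined {
  return session.versions.find((v) => v.version === version)?.mermaid;
}
```

Storing the full Mermaid source per version, rather than diffs, is the simpler choice here: diagrams are small text, and any version can be rendered without replaying earlier ones.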

Check it out at shimmerdiagrams.com.

Thank you to Joshua Lochner for the excellent transformers.js library as well as the extremely useful transformers.js-examples code.